
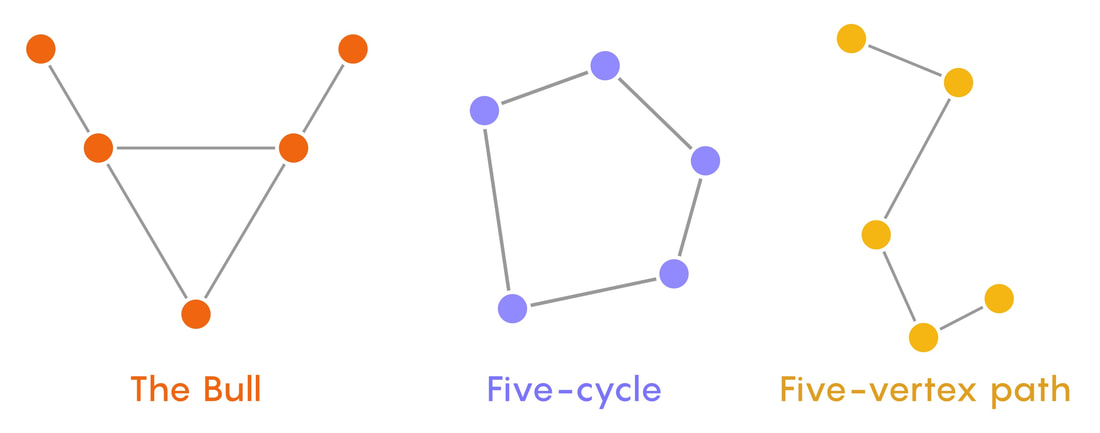

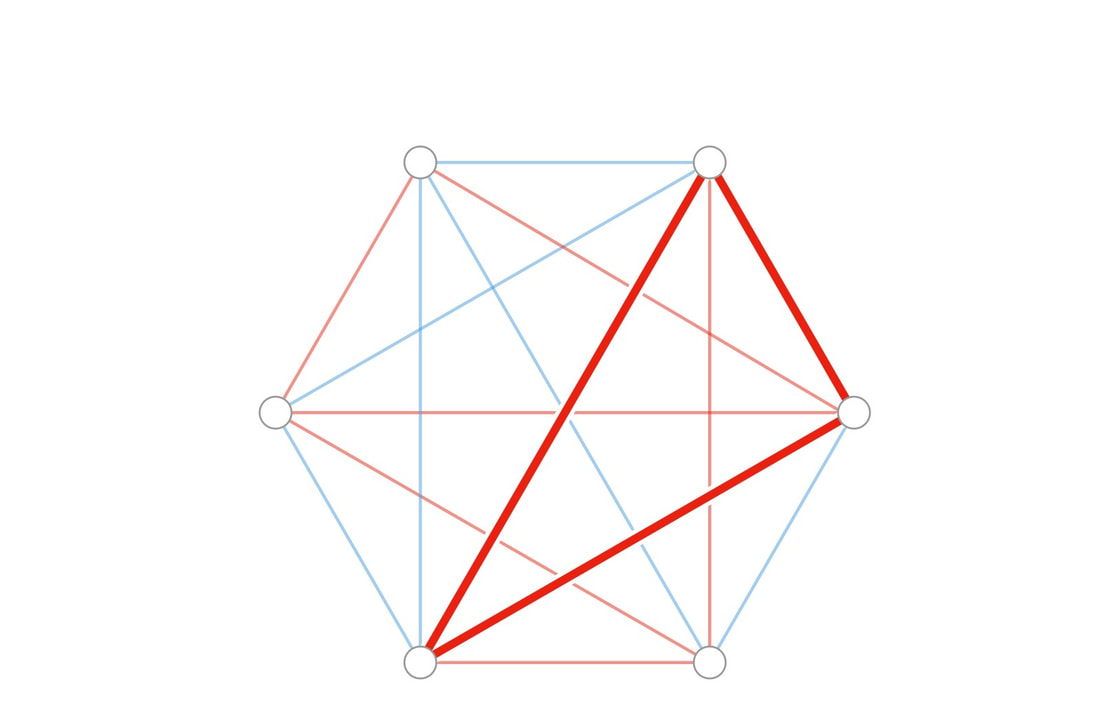

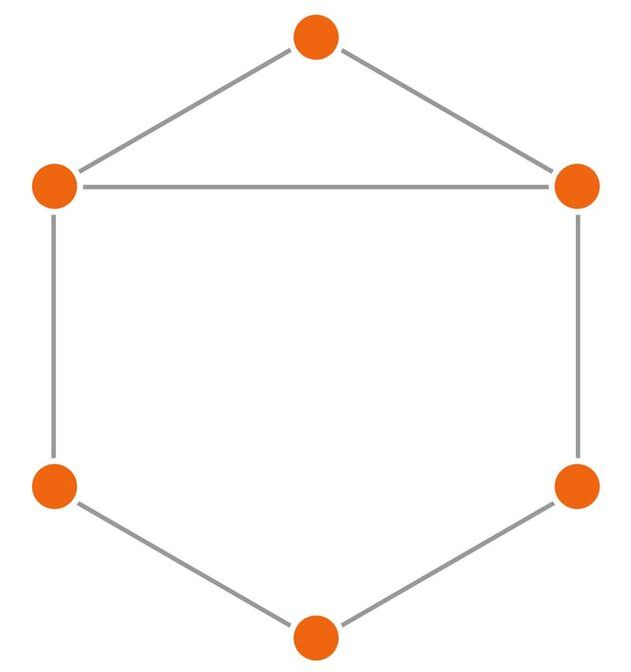

Nitya Nigam

Graph theory is having a great year. Following the proof of lower bounds on Ramsey numbers (link is to our coverage) from Asaf Ferber and David Conlon in late 2020, a new paper has settled special cases of the Erdős–Hajnal conjecture. Essentially, the conjecture implies that forbidding certain structures at a small scale impacts the macroscopic structure of the graph, which has powerful consequences for graph theory (we'll go into more detail later in the article). The new proof, published in February by Maria Chudnovsky and Paul Seymour of Princeton University, Alex Scott of the University of Oxford, and Sophie Spirkl of the University of Waterloo, covers two special cases of the conjecture, so although a complete proof still evades us, significant progress is being made.

More formally, the Erdős–Hajnal conjecture states that if a graph does not contain a certain structure (known as an induced subgraph), then the graph will inevitably have either a large clique or a large independent set. Let's define these terms:

Clique: A subset of vertices in a graph such that every vertex in the subset is connected to every other vertex in the subset by an edge.

Independent set: A subset of vertices in a graph such that no vertex in the subset is connected to any other vertex in the subset.

Large: A large clique or independent set in a graph with V vertices is one with more vertices than would typically arise in a random graph with V vertices.

Chudnovsky and her colleagues proved the conjecture for two special cases. One was the specific forbidden induced subgraph of a "five-cycle" - a set of 5 vertices where each vertex is connected to exactly two others in the set. The other was a "five-cycle with a hat" - a set of 6 vertices similar to a 6-cycle, but with an extra edge joining two vertices (shown on the left).
While most conjectures are formed with mathematical consensus agreeing they are likely to be true, the mathematicians working on this problem actually expected to disprove the conjecture. “We were all a little shocked,” said Chudnovsky. Prior to this paper, the conjecture had only been proven true for induced subgraphs of four vertices or fewer, as well as another special 5-vertex subgraph ("The Bull" below) by Chudnovsky and Shmuel Safra in 2005. This leaves just the five-vertex path as the last unresolved five-vertex induced subgraph, and the clear next step towards eventually proving the full conjecture. “The five-vertex path has to be next. If you can’t do that, you’re still nowhere,” said Paul Seymour. We're excited to see what developments are still to come!
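The definitions of clique and independent set used in this article can be checked by brute force on a tiny graph. The sketch below is only an illustration: the function names and the edge-set representation are our own choices, and trying every subset is only feasible for very small graphs. It uses the five-cycle, one of the forbidden subgraphs mentioned above, as the example.

```python
from itertools import combinations


def is_clique(edges, vertices):
    """True if every pair of the given vertices is joined by an edge."""
    return all(frozenset(pair) in edges for pair in combinations(vertices, 2))


def is_independent_set(edges, vertices):
    """True if no pair of the given vertices is joined by an edge."""
    return not any(frozenset(pair) in edges for pair in combinations(vertices, 2))


def largest(edges, n, predicate):
    """Size of the largest subset of vertices 0..n-1 satisfying the predicate.

    Tries every subset, so it is exponential in n (fine for toy graphs only).
    """
    for size in range(n, 0, -1):
        if any(predicate(edges, subset) for subset in combinations(range(n), size)):
            return size
    return 0


# The five-cycle: vertices 0..4, each joined to its two neighbours.
five_cycle = {frozenset((i, (i + 1) % 5)) for i in range(5)}

print(largest(five_cycle, 5, is_clique))           # 2
print(largest(five_cycle, 5, is_independent_set))  # 2
```

In the five-cycle both numbers are 2: the biggest clique is a single edge, and the biggest independent set is a pair of non-neighbouring vertices.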


Malhar Rajpal

In my last article, I talked about László Lovász, one of this year’s winners of the prestigious Abel Prize. In this article, I will talk about the background and accomplishments of this year's other recipient, Avi Wigderson.

On 9th September 1956, Avi Wigderson was born in Haifa, Israel. He graduated with a B.Sc. in Computer Science from the Technion (The Israeli Institute of Technology) in 1980. He then went to Princeton University in the USA for his graduate studies, where he completed his PhD in computational complexity under Richard Lipton. Wigderson's major contributions are in the field of computational complexity, which is the study of how quickly computer problems can be solved. You may have heard of the P=NP problem, which is the fundamental question of computational complexity. Computer problems are designated as P (meaning they can be easily solved in polynomial time) or NP (meaning it would take a very long time to solve the problem with known algorithms, but the answer can be verified quickly). Computational complexity is thus founded on the question of whether all the hard to solve problems in the NP set can be reduced to problems that are easy to solve and are in the set P, and thus whether P = NP. This is an unsolved and hotly debated question in mathematics. Wigderson made remarkable developments in this field. One of his biggest achievements is researching how randomness can be used to solve computational problems in a quicker period of time. He showed that if a computer can “flip coins” during the computational process, rather than if the computer chooses each decision, then a solution to a computational problem can be reached in a quicker period of time. This was massive, and it showed that randomness could be used to facilitate a great deal of tasks in the computational world. Dealing with randomness, however, increases the chance of an error in the solution, and therefore Wigderson, with Noam Nisan and Russell Impagliazzo, showed that for any algorithm that uses coin flipping to solve a difficult problem, there is another algorithm that is nearly as fast that uses deterministic (non-random) methods to reach the same solution, under certain conditions. 
This finding was a huge accomplishment, as it showed that, under those conditions, the problem class known as “BPP” in the computer science world is actually the same as the complexity class P, revolutionising the way random algorithms were perceived. Perhaps what Wigderson is most famous for is his co-discovery of the zig-zag product in 2000. The zig-zag product is an innovative finding that links several different fields such as group theory, graph theory, and complexity theory. Very simply put, the zig-zag product of regular graphs takes a large graph and a smaller graph and produces a new graph with the approximate size of the large graph but the degree of the smaller one. According to Wikipedia, it is “a method of combining smaller graphs to produce larger ones used in the construction of expander graphs”. There are, of course, several intricacies to this finding, but they are beyond the scope of this article. The zig-zag product is extremely powerful and carries a huge number of applications, such as finding the optimal way to solve a maze problem. Since he has co-authored over 100 research papers, it would be impossible to cover all of his brilliant ideas in one article. Nonetheless, these achievements alone are truly extraordinary.

Malhar Rajpal

A few weeks ago, two extremely renowned mathematicians, Avi Wigderson and László Lovász, won the prestigious Abel Prize. Becoming an Abel Prize laureate is not an easy feat, since it is only awarded to the most accomplished mathematicians in a given year. Known colloquially as the Nobel Prize of mathematics, it has a substantial cash prize of 7.5 million Norwegian kroner. In this article, I will talk about one of the 2021 Abel Prize winners, László Lovász, and his work.

On March 9, 1948, Lovász was born in Budapest, Hungary. He was an extremely talented mathematician from an early age and managed to win three gold medals and a silver medal at the renowned International Mathematical Olympiad, the most competitive and largest high school math competition in the world, where countries send teams of their six best mathematicians to compete. Winning a medal is not an easy feat, with only around the top 8% of already brilliant participants receiving a gold medal. Achieving four medals is thus quite an amazing achievement, and it truly showed Lovász’s outstanding mathematical ability at a young age. As a prodigious mathematician, he had the opportunity to meet and learn about graph theory from Paul Erdős in his youth. After studying mathematics at the undergraduate and postgraduate level at Hungary's top universities, Lovász became increasingly prominent as a researcher in the fields of mathematics and computer science. He worked under the supervision of Tibor Gallai through his doctoral research in the 1970s. He also worked closely with Erdős to develop methods to complement Erdős’ graph theory techniques. One of his largest accomplishments was developing the Lovász local lemma, which states that as long as a collection of events are ‘mostly’ independent from each other, and aren’t individually very likely, then there is a positive probability that none of them occurs. Of course, there are several intricacies to this lemma that are beyond the scope of this article, but it was a vital development because it led to breakthroughs in creating existence proofs for rare graphs and is used in the probabilistic method.
Relating to graph theory, he also contributed to the formulation of the Erdős-Faber-Lovász conjecture in 1972, which stated that ‘If k complete graphs, each having exactly k vertices, have the property that every pair of complete graphs has at most one shared vertex, then the union of the graphs can be properly colored with k colors’. The conjecture remains unsolved in general; however, in 2021 a team of five researchers proved it for all sufficiently large values of k. In 1978 he also proved Kneser’s conjecture: Martin Kneser had conjectured in 1955 that the chromatic number (the minimum number of colours needed to colour the nodes of a graph such that no two nodes that share an edge have the same colour) of the Kneser graph K(n, k), for n >= 2k, is exactly n-2k+2. Lovász proved it using topological methods, which was significant because it helped to launch the huge field of topological combinatorics. Further, in 1982, Lovász worked with Arjen Lenstra and Hendrik Lenstra to create the LLL algorithm, which is a polynomial-time lattice basis reduction algorithm. This algorithm was a huge breakthrough for Lovász since it led to massive developments in polynomial factorisation and in cryptography, where it is used to analyse the security of systems such as RSA. This is by no means an exhaustive list of Lovász’s numerous accomplishments, and Lovász has made a considerable impact in the fields of mathematics and computer science over the last five decades. He has been rewarded with several prestigious awards and has worked in numerous outstanding institutions including Yale University, Microsoft Research Center, and the Hungarian Academy of Sciences. His Abel Prize for significant contributions to theoretical computer science and discrete mathematics is therefore fully deserved.

Nitya Nigam

Since the advent of modern computing in the 1950s, computers have played an essential role in mathematical research.
They're often used to test conjectures, as they can iterate through long lists of numbers extremely quickly to check whether they satisfy the conditions that have been predicted. They are also used to find specific types of numbers, such as Mersenne primes (you can even join the Great Internet Mersenne Prime Search yourself). However, coming up with conjectures has long been the work of mathematicians themselves. A good mathematical conjecture states something profound and useful within the field. It must be interesting enough to prompt investigation, but not so niche as to have very narrow applications. Getting computers to strike this balance is therefore a tall task.

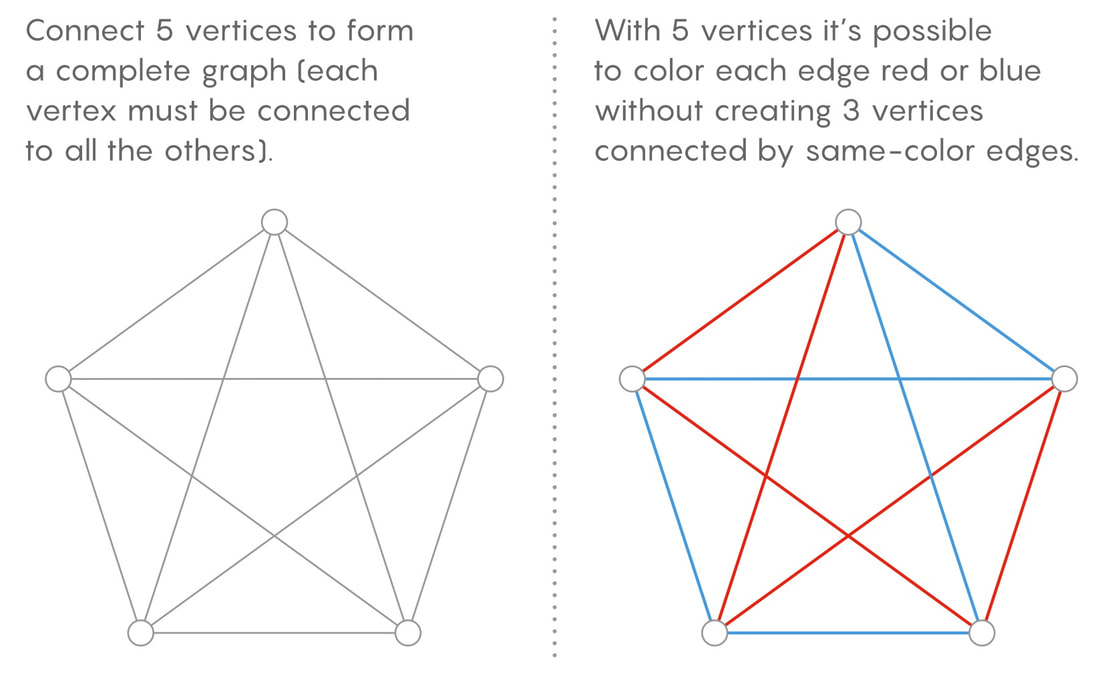

However, researchers at the Technion in Israel have created an automated conjecturing system called the Ramanujan Machine, named after the famous mathematician Srinivasa Ramanujan. The software has already conjectured original formulas for several mathematical constants (published in this Nature article) including Catalan's constant and pi. The software works by leveraging the fact that many such constants are equal to continued fractions (fractions where the denominator is the sum of two terms, one of which is a fraction with a denominator that also contains a fraction, and so on until infinity). Continued fractions have been of mathematical interest both for their aesthetics and for what they reveal about constants' fundamental properties. The software first looks for continued fraction expressions that seem to equal universal constants by choosing arbitrary constants and expressions, then computing each side to a certain level of precision and seeing if they approach each other. If they seem to approach each other, they are computed to higher precision to make sure the match is not a coincidence. Since formulas already exist to compute pi and other constants to an arbitrary level of precision, the only thing in the way of making sure the sides match is computing time. So far, the software has conjectured a formula for Catalan's constant that allows for its fastest computation yet. This is an important milestone for computational mathematics, not just because it shows promise for developing faster methods to compute other constants, but because these automated conjectures may be used to reverse-engineer more theorems, further fueling mathematical innovation.

Nitya Nigam

After over 70 years of effort, mathematicians have finally made headway on one of graph theory’s most confounding puzzles.
In September (sorry for the article backlog, we’ve been swamped), Asaf Ferber of UC Irvine and David Conlon of Caltech published a proof that provides the closest approximation yet for “multicolour Ramsey numbers”, which are measures of how large graphs can get before they start to contain patterns. Graphs are groups of nodes (points) and edges (lines connecting the points) - Malhar’s articles on search algorithms make extensive use of these mathematical constructs. This development gives mathematicians a deeper understanding of the relationship between order and randomness in graphs, which is of fundamental importance to the field. As described by Maria Axenovich of the Karlsruhe Institute of Technology in Germany, “there are always clusters of order [in graphs], and the Ramsey numbers quantify it.” More specifically, the Ramsey number for a given pair of parameters (number of colours and clique size) is the minimum number of vertices a complete graph must have so that every colouring of its edges contains a monochromatic clique of the given size. There’s a lot of unfamiliar terminology in this definition, so let’s unpack some of the words that are probably new to you in this context:

Colour: In the context of Ramsey numbers, mathematicians are interested in finding out the ways in which the edges of a graph can be coloured. Colouring is essentially just a simple way to separate edges into distinct groups.

Clique: A subset of vertices in a graph such that every vertex in the subset is connected to every other vertex in the subset by an edge.

Monochromatic clique: A clique where all of the edges are the same colour.

Complete graph: A complete graph of size k is a graph with k vertices where every vertex is connected to every other vertex by exactly one edge.
Now that we understand these terms, let’s take a look at an example (diagrams from Quanta Magazine): Ramsey numbers are difficult to calculate because the complexity of a graph increases extremely quickly as vertices are added. There are simply too many ways to apply colours for these numbers to be calculated manually, and the task quickly becomes too complicated even for computers. In 1935, Paul Erdős and George Szekeres proved an upper bound of 4^t on 2-colour Ramsey numbers, and in 1947 Erdős proved a lower bound of sqrt(2)^t, where t is the clique size. Clearly, there is a huge difference between these bounds, especially as t gets large.
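For the smallest interesting case, the definition can be tested directly by trying every colouring. The sketch below is our own illustration, not the method of Ferber and Conlon. It takes the graph on n vertices in which every pair of vertices is joined by an edge, and asks whether every 2-colouring of its edges contains a monochromatic clique of size t. The function names are made up for this example, and the exhaustive search is only practical for very small n.

```python
from itertools import combinations, product


def has_monochromatic_clique(n, colouring, t):
    """colouring maps each edge (i, j) with i < j to a colour."""
    for clique in combinations(range(n), t):
        colours = {colouring[edge] for edge in combinations(clique, 2)}
        if len(colours) == 1:
            return True
    return False


def every_colouring_has_clique(n, t, num_colours=2):
    """True if no edge colouring on n vertices avoids a monochromatic t-clique."""
    edges = list(combinations(range(n), 2))
    for assignment in product(range(num_colours), repeat=len(edges)):
        colouring = dict(zip(edges, assignment))
        if not has_monochromatic_clique(n, colouring, t):
            return False  # found a colouring with no monochromatic clique
    return True


print(every_colouring_has_clique(5, 3))  # False
print(every_colouring_has_clique(6, 3))  # True
```

Five vertices can still be coloured so that no single-colour triangle appears, but six cannot, so the 2-colour Ramsey number for clique size 3 is 6. This sits between sqrt(2)^3 (about 2.8) and 4^3 = 64. The search already covers 2^15 colourings at six vertices, which is why this approach breaks down so quickly.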

Using a mixture of deterministic and probabilistic methods (described both in this article and in the original paper - let us know in the comments if you’d like us to give you a more basic explanation of their methods), Ferber and Conlon improved Erdős’ lower bounds for the three- and four-colour cases from sqrt(3)^t and sqrt(4)^t = 2^t to 1.834^t and 2.135^t. While these may not seem like huge differences, these new bounds prove that exponentially larger 3- and 4-coloured graphs exist which don’t have monochromatic cliques of the specific sizes. Essentially, they established that disorder is present in larger graphs than was previously thought. Let us know in the comments if you'd like to learn more about Ramsey numbers or graph colouring!

Nitya Nigam

Get your power tools out and start building, because scientists from the University of Queensland in Australia have proven that time travel is theoretically possible after resolving a logical paradox. Germain Tobar and Fabio Costa used mathematical models to align classical dynamics (Newtonian physics) with general relativity (proposed by Einstein). You can find their full paper here, but we’ll be summarising its findings in the rest of this article.

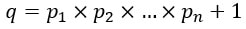

You may have heard of the grandfather paradox, which is a commonly cited logical problem with the concept of time travel. If someone were to go back in time and kill their grandfather, classical dynamics states that the events following the grandfather’s demise would result in the time traveller not being born. However, relativity allows for the time traveller to use a “time loop” (formally called a closed timelike curve, or CTC) to go back in time and kill their grandfather. CTCs are essentially results of solutions to the mathematical equations of relativity. The methods used by Tobar and Costa reconcile this maths with that of classical dynamics. The two scientists used the model of the COVID-19 pandemic to work out whether the two conflicting theories could coexist. What they found is that events would realign themselves so that even if someone went back in time from t=0 and stopped COVID-19 from infecting a human at, say, t=-10000, the end result of the pandemic at t=0 would still occur. “In the coronavirus patient zero example, you might try and stop patient zero from becoming infected, but in doing so you would catch the virus and become patient zero, or someone else would,” explained Mr Tobar. Essentially, regardless of what you did as a time traveller, events would recalibrate themselves without you, meaning that the pandemic would occur and give your original self a reason to go back in time and stop it. Mr Tobar summed this up by saying: “The range of mathematical processes we discovered show that time travel with free will is logically possible in our universe without any paradox.” Dr Fabio Costa added: “The maths checks out - and the results are the stuff of science fiction.” This is truly some remarkable research, but readers must keep in mind that this paper has only been through one round of peer review, so there may be errors in the paper that haven’t been caught just yet. 
Furthermore, this only shows that there is a possibility for logically consistent time travel, not that this definitely can exist. But this is definitely a win for all the sci-fi nerds out there.

Long Him Lui

The twin prime conjecture is one of the most famous unsolved problems in the field of mathematics known as number theory. Number theory, as the name suggests, deals with numbers, more specifically the relationships between different types of numbers. Some examples are: One of the most notorious groups of numbers that mathematicians deal with is the prime numbers. Prime numbers are tricky to deal with because there is no clear relationship between the nth term and the (n+1)th term, so we cannot write out a simple general formula for the nth prime. The unpredictability and abstractness of prime numbers are precisely what attract mathematicians to the twin prime conjecture, with world-renowned mathematicians such as James Maynard and Terence Tao having contributed significant work to the attempt to solve this mathematical puzzle. Before diving any deeper into the twin prime conjecture, we first need to define the term conjecture. A conjecture is a proposition that is suspected to be true based on preliminary evidence in its favour, but for which no proof or disproof has been found. After a conjecture is proved to be true, it is considered a theorem, becoming a non-self-evident statement that has been proven to be true on the basis of generally accepted axioms (statements that are accepted to be true) or previously established mathematical proofs and other theorems. A twin prime pair is a set of two prime numbers that have a difference of two. Mathematicians typically label differences between prime numbers using the term “prime gap”, so the definition of a twin prime is more commonly heard as: a set of two primes that have a prime gap of two. For example, (41,43) would be considered a twin prime pair.
Logically speaking, twin prime pairs become increasingly hard to detect as we ascend into larger number ranges, but this is to be expected. The general tendency is that gaps between adjacent prime numbers increase as numbers get larger, since there are more potential factors that a number can be divided by, resulting in a diminishing chance for a number to be prime. This results in twin primes becoming increasingly rare as we proceed into larger and larger numbers. However, we cannot examine the twin prime conjecture if we cannot prove that infinitely many primes exist, since the twin prime conjecture suggests that there are infinitely many twin prime pairs. Even though there is no pattern showing the correlation between all primes, we are sure that there exist an infinite number of primes. This can be shown with a proof by contradiction. Suppose there are only finitely many primes, p1, p2, ..., pn, and let q = p1 × p2 × ... × pn + 1. Our new number q is clearly larger than any prime number, so it is not equal to any of them. Since p1 to pn constitute all the prime numbers, q cannot be prime. Since non-prime numbers can be written as a product of primes, q must be divisible by at least one of our finitely many primes pi (where 1 ≤ i ≤ n). However, when we divide q by pi, we obtain a remainder of 1, so q is not divisible by any of them, which contradicts the original assumption that there are a finite number of primes. Therefore, we can say that there exist an infinite number of primes. When studying this conjecture, a very common tool that mathematicians use is the logarithmic integral function li(x), which also has many uses in physics. The reason why this is used in the twin prime conjecture is that the logarithmic integral function is considered a very good approximation for the prime counting function π(x), which counts the prime numbers less than or equal to x. The graph shows the total number of primes that exist in a set of N integers.
The y axis shows the number of primes and the x axis shows the total number of numbers N. The blue line shows the prime count according to the Prime Number Theorem, while the red line shows the prime count according to the logarithmic integral function. Notice that the approximation is very good for any small to medium sized set of integers from 0 to around 50000, but starts to have a noticeable discrepancy from large sets above 50000 integers. However, a good thing about this estimation is that it is a consistent underprediction, which makes it convenient to adjust for discrepancies. In general, this theorem is able to reciprocate a generalized version of the twin-prime conjecture for any prime gap by taking the average distance between a prime number and a sample space of integers combined with the Prime Number Theorem, which estimates the number of primes within N integers. By taking a product of these two values, we can estimate the average prime gap within N integers.
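The counts discussed above are easy to reproduce for small N. The sketch below is our own illustration: the function names are made up, and it uses the simple N / ln N estimate from the Prime Number Theorem rather than the logarithmic integral. It sieves the primes up to N, compares the true count with the estimate, and counts twin prime pairs along the way.

```python
import math


def primes_up_to(n):
    """Sieve of Eratosthenes: list of all primes <= n."""
    if n < 2:
        return []
    sieve = bytearray([1]) * (n + 1)
    sieve[0] = sieve[1] = 0
    for p in range(2, math.isqrt(n) + 1):
        if sieve[p]:
            # Cross out every multiple of p starting from p*p.
            sieve[p * p::p] = bytearray(len(range(p * p, n + 1, p)))
    return [i for i, flag in enumerate(sieve) if flag]


def twin_prime_pairs(primes):
    """Pairs of consecutive primes that differ by exactly two."""
    return [(p, q) for p, q in zip(primes, primes[1:]) if q - p == 2]


for n in (100, 1_000, 10_000, 100_000):
    primes = primes_up_to(n)
    estimate = n / math.log(n)  # Prime Number Theorem estimate
    twins = twin_prime_pairs(primes)
    print(n, len(primes), round(estimate), len(twins))
```

For N = 100 there are 25 primes and 8 twin prime pairs, including (41, 43). In every row printed, N / ln N comes out below the true count, which is the kind of consistent underprediction described above.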

Lots of work is still being done on the twin prime conjecture. A major step came in 2013, when the mathematician Yitang Zhang proved that there are infinitely many pairs of prime numbers that differ by 70 million or less. Even though 70 million is still quite far from two, it is considered a huge breakthrough in the mathematical world, and hopefully more research can be completed on this conjecture for it to become one of the most beautiful theorems mathematics has ever seen.

Sayonee Das

As far back as the time of the ancient Greeks, mathematicians have studied whole numbers and their properties. This branch of maths is called number theory, and is one of the oldest mathematical fields. To this day, there are open questions in number theory, which demonstrates its complexity.

One source of such open questions is the study of perfect numbers. A perfect number is a whole number (integer) which equals the sum of its proper divisors. For example, 6 is divisible by 1, 2, and 3 and it’s also equal to their sum as 1 + 2 + 3 = 6. Likewise, 28 is divisible by 1, 2, 4, 7, and 14 and it’s equal to 1 + 2 + 4 + 7 + 14 = 28. The simplicity of what defines perfect numbers makes them easily understandable to non-mathematicians, so their study intrigues mathematicians and non-mathematicians alike. Perfect numbers were first studied around 300BC when Euclid first proved that if (2^p − 1) is prime then (2^(p−1))(2^p − 1) is perfect. The first four perfect numbers were the only ones known to early Greek mathematicians. Throughout history, many more mathematicians played a role in bettering our understanding of perfect numbers. One of them was Ibn al-Haytham, who first suggested that the opposite (converse) of Euclid's theorem was also true: not only do numbers of the form (2^p − 1) generate perfect numbers, but all even perfect numbers are generated this way. This was eventually proven by Euler in the 1700s. A clear, animated explanation of this proof is linked here. Today, 51 perfect numbers have been discovered, with the largest having 49,724,095 digits - over three million more digits than the 50th perfect number! Even though new even perfect numbers are discovered almost every year, some questions still remain:

Infinite even perfect numbers

Sometimes we see prime numbers that have the form (2^p − 1), where p is a prime number. These numbers are called Mersenne primes. As mentioned above, Euclid proved that if (2^p − 1) is a Mersenne prime, then (2^(p−1))(2^p − 1) is a perfect number. From this, we can see that the question of the infinitude of Mersenne primes and that of even perfect numbers are linked. By proving the existence of infinitely many Mersenne primes, we can prove the existence of infinitely many even perfect numbers. Currently, no proof in either direction - that there are either a finite or an infinite number of Mersenne primes - exists. Mathematical consensus, based on empirical data and heuristic methods using harmonic series, is generally that there are likely to be an infinite number of Mersenne primes, and therefore even perfect numbers, but a concrete proof of this remains to be found.

Odd perfect numbers

Whether or not odd perfect numbers exist hasn’t been proven either. However, people have proved properties that odd perfect numbers must have, if there are any. Although the requirements for odd perfect numbers have become more demanding, they’re not contradictory and so it remains logically possible that such numbers exist. Yet, most experts believe that odd perfect numbers don’t exist. Wikipedia lists the properties that odd perfect numbers must have; if you were to look at them, their rigidity would surprise you - I know I was surprised! Among many others, one property listed is that an odd perfect number must have at least 300 digits. Computers have been testing odd numbers to see if they are perfect for decades now, but have yet to find any odd perfect numbers. Although computer testing would be a valid way to disprove the conjecture that no odd perfect numbers exist, as a counterexample would disprove the claim, if there are indeed no odd perfect numbers, computer testing will not provide us with any real knowledge.
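On a very small scale, the computer testing described here can be sketched as follows. This is only an illustration with helper names of our own. It checks numbers directly against the definition of a perfect number, generates even perfect numbers from Euclid's formula, and scans a short range of odd numbers. Real searches cover vastly larger ranges with far better methods.

```python
def sum_proper_divisors(n):
    """Sum of the divisors of n, excluding n itself."""
    if n < 2:
        return 0
    total = 1  # 1 divides every n >= 2
    d = 2
    while d * d <= n:
        if n % d == 0:
            total += d
            if d != n // d:  # avoid counting a square root twice
                total += n // d
        d += 1
    return total


def is_perfect(n):
    return n > 1 and sum_proper_divisors(n) == n


def is_prime(n):
    """Trial division; fine for the small numbers used here."""
    return n >= 2 and all(n % d for d in range(2, int(n ** 0.5) + 1))


def euclid_perfect(p):
    """Euclid's formula: 2^(p-1) * (2^p - 1) when 2^p - 1 is prime, else None."""
    mersenne = 2 ** p - 1
    return 2 ** (p - 1) * mersenne if is_prime(mersenne) else None


print([n for n in range(1, 10_000) if is_perfect(n)])     # [6, 28, 496, 8128]
print([euclid_perfect(p) for p in (2, 3, 5, 7)])          # [6, 28, 496, 8128]
print(any(is_perfect(n) for n in range(1, 10_000, 2)))    # False
```

The direct search and Euclid's formula agree on the first four perfect numbers, and no odd number below 10,000 is perfect. As the discussion makes clear, a search that finds nothing proves nothing, however far it is extended.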
Even if we test a vast number of odd numbers, we will not be able to conclusively state that the next odd number isn't perfect, so a concrete mathematical proof is required. Let us know in the comments if you enjoyed this discussion of perfect numbers, and what you'd like to see us talk about next!

Long Him Lui (guest writer)

With STEM careers increasing in popularity as we enter the new decade, many students may choose to pursue a science-related major in university. Majors from mechanical engineering to biochemistry provide a vast range of topics to choose from. However, there is one overarching major offered by universities that links all the science majors together, even having applications in the human sciences. Conveniently, it is also present in the STEM acronym, but is often forgotten. That subject is mathematics, and today, I will be explaining why I believe that mathematics is one of the most underrated university majors out there. As someone who has gone through the university application process and is planning to study mathematics in university this upcoming September, I hope to share the advantages and disadvantages of pursuing a mathematics degree as well as tips for the application process.

Upon telling people about my desire to pursue mathematics as my undergraduate degree, two FAQs were:

The job opportunities that a mathematics degree can offer are endless. A common misconception is that mathematics majors mostly go into higher education, eventually teaching in educational institutes like universities, but in fact, it is quite the opposite. Most mathematics majors choose to work in other industries, such as finance, biotechnology, and software development to name a few. This is because mathematics is an extremely broad and flexible subject that can be easily applied in other areas of knowledge. Of course, there are parts of pure mathematics such as number theory which are limited to mathematics itself, but there are many fields that stretch into other subjects. Many university courses often have a compulsory mathematics course for their specific subject. For example:

However, a huge disadvantage of applying as a mathematics major is that it is one of the hardest majors to get into, because the starting benchmark is very high. You need to demonstrate underlying mathematical ability to apply for the major. Unlike majors such as architecture and engineering, mathematics is a subject that is taught from a very young age, so universities expect maths applicants to have a very solid foundation in the subject. The top schools in the UK require applicants to take aptitude tests (MAT or STEP) to determine that the applicant’s level of mathematics meets their standard. This may come before their offer (such as the MAT for Oxford and Imperial), or as part of the offer (STEP). Unlike aptitude tests for other subjects (such as ENGAA for engineering), which state that the grade obtained on the test does not greatly affect whether an interview or offer is given, the results of the mathematics aptitude tests play a huge role in the university offer. In addition, the mathematics aptitude tests are rated as the hardest out of all the UK university aptitude tests. Therefore, if you are applying to study mathematics in the UK, it would be a great idea to start practicing some aptitude test problems and mathematics interview questions during the summer leading into your final/senior year. For some of the top universities in the UK, the typical offers for mathematics are:

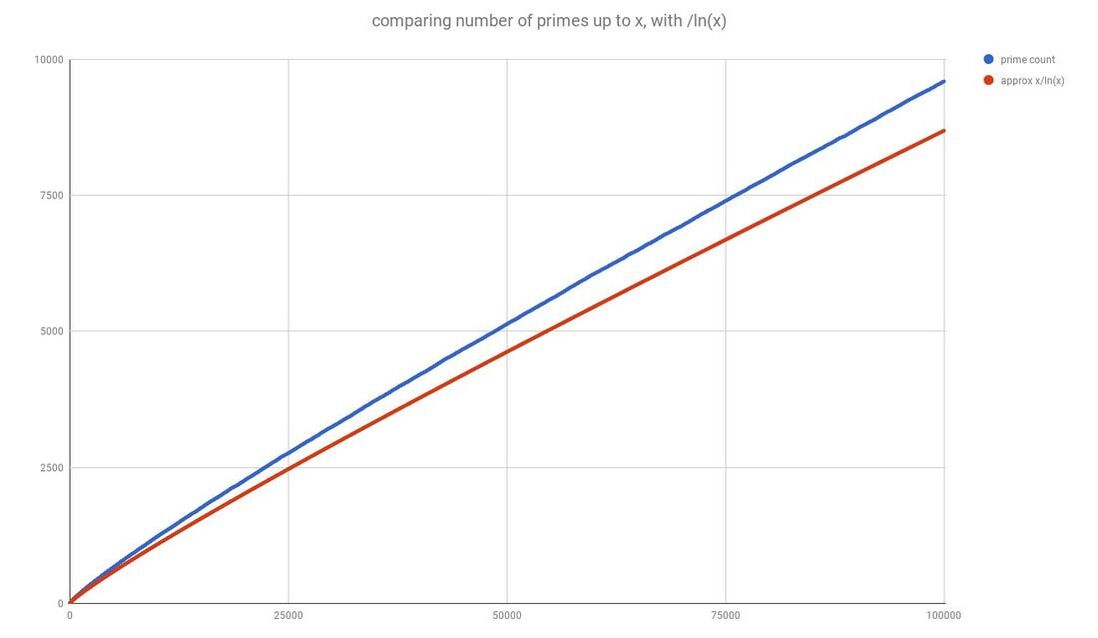

The application process for a mathematics major is another aspect that makes the major difficult. Unlike other majors, it is hard to find internships that are solely mathematics focused. This is because such roles require further education, so they are better suited to bachelor/master/PhD students. Therefore, the best approach would be to bolster school-related mathematics projects to complete your CV. Apart from getting the top grades for the exam board you are doing, creating some mathematics coursework (such as the EE for IBDP students) would serve as a very good topic for your personal statement. Success at mathematics competitions and Olympiads would act as verification of your mathematical abilities. Mention any mathematics tutoring, coaching, or mathematics-related summer camps you have done. All these things add up to complete a very well-rounded mathematics CV. However, the most important thing is to show your passion towards the subject, and this goes for any university major. Show your love for mathematics by mentioning in your personal statement an interesting proof that you came across in your further reading. With all of the above, along with good aptitude test scores, you should be able to secure an offer or interview spot, where the next step would be to show your passion and skills in the interview. I hope this article provided a useful guide to mathematics at university. Let me know in the comments if you would like to learn more about how to prepare for specific aspects of the application process, such as STEP/MAT or interview preparation!

Nitya Nigam

If you keep up with the latest developments in mathematical research, you may have heard the term “topology” being thrown around, but might not know exactly what it is. Topology is a branch of maths that studies the properties of spaces that stay the same after continuous deformation.
In topology, objects can be stretched and squeezed like rubber, but they cannot be broken, so it is sometimes called “rubber-sheet geometry”. Under these rules, a triangle can be deformed into a circle, but a figure 8 cannot, as it has two holes in it. So, circles and triangles are topologically equivalent, but are distinct from figure 8s.

In honour of the birthday of Maryam Mirzakhani, the first woman to win a Fields Medal (awarded for her groundbreaking research on the dynamics and geometry of Riemann surfaces, which draws heavily on topology), I thought I would write an article about one of current mathematics’ most active research fields. Links to relevant external resources are provided throughout the article in case you would like to extend your knowledge.

You may wonder why topology is relevant. It has only emerged as a distinct mathematical field relatively recently; most topological research has been done after 1900. However, graph theory, which studies the properties of spaces built up from networks of vertices, edges and faces, can be viewed as a form of topology. Spaces in graph theory are considered identical if all the vertices are connected up in the same way, regardless of their layout; graphs which are structurally identical but are laid out differently are called isomorphic, and are topologically equivalent. Graph theory has a wide range of applications in computer science, such as modelling computer networks, but it can also be used to optimise road networks and analyse linguistic trends. Nonetheless, modern topological research goes far beyond graph theory, and has applications in areas of physics such as the study of vector fields and string theory.

One main subfield of topology is point set topology. It analyses the local properties of spaces, and is closely related to calculus. It generalises the concept of continuity from calculus to define topological spaces, so that the limits of sequences can be considered. If distances can be defined in these spaces, they are called metric spaces.
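For readers who want the formal statement behind "distances can be defined", here is the standard textbook definition, which the article itself does not spell out. A metric on a set $X$ is a function $d$ that assigns a real number to every pair of points and satisfies the conditions below. The symbols $X$, $d$, $x$, $y$, $z$ are notation chosen for this sketch.

```latex
\begin{align*}
  & d : X \times X \to \mathbb{R}, \quad \text{and for all } x, y, z \in X: \\
  & d(x, y) \ge 0, \quad \text{with } d(x, y) = 0 \iff x = y
      && \text{(positivity)} \\
  & d(x, y) = d(y, x)
      && \text{(symmetry)} \\
  & d(x, z) \le d(x, y) + d(y, z)
      && \text{(triangle inequality)}
\end{align*}
```

The familiar straight-line distance in the plane, $d\big((x_1, y_1), (x_2, y_2)\big) = \sqrt{(x_1 - x_2)^2 + (y_1 - y_2)^2}$, is one example that satisfies all three conditions.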
In some cases, however, distance cannot be defined in a meaningful way: a continuous deformation leaves a space fundamentally the same but changes its “size”, so distance is not something that topology preserves.

Another area of topology is algebraic topology, which instead considers the global properties of spaces. It answers topological questions by converting topological spaces into algebraic objects such as groups and rings. For example, topological spaces like the torus and the Klein bottle, pictured below, can be distinguished from each other because they have different homology groups (algebraic objects that, roughly speaking, record the holes in a space). Similarly, other algebraic concepts can be used to analyse and research topological spaces.

The final area of topology discussed here is differential topology, which studies spaces with some kind of smoothness associated with each point. In this field, the triangle and circle would not be equivalent to each other in terms of smoothness, because the triangle has hard corners, whereas the circle has a continuously curved edge. Differential topology is particularly relevant to the physics of vector fields, and is therefore used to study things like magnetic and electric fields. It is also helpful in describing the 4-dimensional space-time structure of our universe.
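To make the algebraic-topology claim about the torus and the Klein bottle a little more concrete, here is a minimal Python sketch that computes Betti numbers, which are the ranks of the homology groups over the rationals. It assumes the usual textbook description of each surface as a square with its edges glued together, giving one vertex, two edges (called `a` and `b` here) and one face. The function names and matrix constants are made up for this illustration and do not come from the article. Rational ranks ignore the "twist" (torsion) part of the Klein bottle's homology, so this is a simplification of the full picture.

```python
from fractions import Fraction


def rank(matrix):
    """Rank of a matrix over the rationals, by Gaussian elimination."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    n_cols = len(rows[0]) if rows else 0
    found = 0  # number of pivots found so far
    for col in range(n_cols):
        # Look for a row at or below the pivot position with a non-zero entry.
        pivot = next(
            (r for r in range(found, len(rows)) if rows[r][col] != 0), None
        )
        if pivot is None:
            continue
        rows[found], rows[pivot] = rows[pivot], rows[found]
        # Clear the entries below the pivot.
        for r in range(found + 1, len(rows)):
            factor = rows[r][col] / rows[found][col]
            rows[r] = [x - factor * y for x, y in zip(rows[r], rows[found])]
        found += 1
    return found


def betti_numbers(n_vertices, n_edges, n_faces, d1, d2):
    """Betti numbers (b0, b1, b2) of a 2-dimensional cell complex.

    d1: boundary matrix from edges to vertices (n_vertices x n_edges).
    d2: boundary matrix from faces to edges (n_edges x n_faces).
    """
    r1, r2 = rank(d1), rank(d2)
    b0 = n_vertices - r1
    b1 = (n_edges - r1) - r2
    b2 = n_faces - r2
    return (b0, b1, b2)


# Both surfaces: every edge starts and ends at the single vertex,
# so the edge-to-vertex boundary matrix is zero.
D1 = [[0, 0]]

# Torus: the face is glued along a, b, a-reversed, b-reversed,
# so its boundary is a + b - a - b = 0.
TORUS_D2 = [[0], [0]]

# Klein bottle: the face is glued along a, b, a-reversed, b,
# so its boundary is a + b - a + b = 2b.
KLEIN_D2 = [[0], [2]]

print(betti_numbers(1, 2, 1, D1, TORUS_D2))  # (1, 2, 1)
print(betti_numbers(1, 2, 1, D1, KLEIN_D2))  # (1, 1, 0)
```

The two surfaces give different triples, so they cannot be deformed into one another. This is the sense in which an algebraic calculation answers a topological question.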

This is just an overview of the immensely complex and constantly evolving field of topology. If this article sparked your interest, you can follow the latest mathematical research explained simply at ScienceDaily. Let me know in the comments what parts of topology you find particularly intriguing, and what you’d like to read about next! |
